Sword Art Online (SAO) was a game-changer when it premiered in 2012. The show was basically a “casting net” to capture bewildered viewers, the ones who couldn’t quite grasp the amazing science and technology unfolding before them through an anime.

Unlike many other sci-fi anime that premiered in the early 2010s, SAO provided “untapped, untouched, but exciting science” for kids, teens, and young adults. In a time when consumer VR headsets weren’t even a fad, viewers would wonder, “What the heck am I watching, and why can’t I stop watching it?”

Today, allow me to enlighten your minds through my favourite “crossover” topics: Sword Art Online and virtual worlds! Virtual worlds are the exciting locations where SAO takes place. Now that we’re closing in on a decade since the show started, wouldn’t you say it’s about time SAO simulations became “closer to reality” in our era?

1. Quick Answer

Despite the amazing research, intensive scientific developments, and exhilarating discoveries mankind has made in the past century, SAO’s virtual massively multiplayer online role-playing game (VMMORPG) is still unlikely to materialize in our lifetime. But don’t lose hope, as it may still happen for our descendants six to seven decades down the road!

In any case, Fulldive could be possible by then if private tech companies can drive the production and distribution of seamless artificial intelligence (AI), sophisticated machine learning (ML), and colossal avatar projects such as the 2045 Initiative (which can be found on this website: 2045 Strategic Social Initiative).

2. MiHoYo’s Genshin Impact as the next SAO

Other than the 2045 Initiative, there are influential games out there that contribute to Augmented Reality (AR) and Virtual Reality (VR). Some of the prominent ones are gacha games and RPGs like Fate/Grand Order, Final Fantasy Brave Exvius, Genshin Impact, Kemco RPGs, and more! In this section, I’ll focus on miHoYo’s Genshin Impact because it could be the next real-life SAO.

Chinese video game developer miHoYo is making waves among gamers with its plan to build a virtual world on the back of Genshin Impact. The company says it wants to create an AR or VR world that could “house a billion people by 2030” 😵!

With over 40 million people playing Genshin Impact on their mobile devices since its release in September 2020, it’s no wonder miHoYo is taking the spotlight! To reach that big goal, miHoYo’s small goals include releasing games every 3 to 4 years.

Not only would that increase player count; it would also enable the company to establish offices abroad while securing its future profitability (it reportedly made $800M in 2020) and its stability in the international gaming and VR industries.

If you want to read more about this important news, see the sources below:

- MiHoYo targeting a virtual world!

- Mihoyo Rakes In $800M in 2020

3. Fulldive is Possible

For SAO VMMORPGs to exist in our era, the burning question on everyone’s mind is whether Fulldive is possible. Yes, it is possible in the long run, but it will be like seeking the Holy Grail of VR because decades of painstaking science are required.

Here’s a small, fun science lesson you can digest while learning about the 3 components needed to achieve Fulldive in the distant future:

4. What is Quantum Computing?

According to Bernard Marr, strategic business & technology advisor of Enterprise Tech, quantum computers use “qubits” to solve complex problems that require more power and time.

Traditional computers (desktops, laptops) and handheld devices (smartphones, tablets, etc.) cannot perform quantum computing because they store information in ordinary bits, each of which is either 0 or 1. Quantum computers instead use quantum bits (or “qubits”), built from atomic and subatomic particles, which can hold a combination of 0 and 1 at the same time. For certain kinds of difficult problems, this could let them work through the possibilities far faster than a traditional computer, potentially while using energy more efficiently.
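To make the “qubit” idea a little less mysterious, here is a minimal sketch in plain Python. It only simulates a single qubit on a traditional computer, so it is nowhere near a real quantum machine, and the function names (`hadamard`, `probabilities`) are my own picks for illustration. A qubit is modeled as two numbers (amplitudes) for the states 0 and 1, and the Hadamard gate is a standard operation that turns a definite 0 into an even mix of 0 and 1.

```python
import math

def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    amp_0, amp_1 = state
    scale = 1 / math.sqrt(2)
    return (scale * (amp_0 + amp_1), scale * (amp_0 - amp_1))

def probabilities(state):
    """Chance of reading 0 or 1 when the qubit is measured."""
    amp_0, amp_1 = state
    return (abs(amp_0) ** 2, abs(amp_1) ** 2)

# A qubit that is definitely 0, like an ordinary bit.
zero = (1 + 0j, 0 + 0j)

# After the Hadamard gate it is an even mix of 0 and 1.
superposed = hadamard(zero)
p0, p1 = probabilities(superposed)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")

# Applying the gate a second time brings the qubit back to a definite 0.
restored = hadamard(superposed)
```

An ordinary bit would have to be either 0 or 1 at every step. The simulated qubit sits in between until it is measured, which is the property quantum algorithms try to exploit.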

Quantum computing would likely be needed to implement Fulldive. It could power through and speed up ML tasks (such as the heavy algebraic calculations they rely on) when human brains connect to cyborgs. The algorithms involved are so complex that even a supercomputer’s computations may simply not be enough when implementing Fulldive.

5. Internet & Information Exchange

Thanks to the fiber-optic cables behind 4G and 5G internet, people can stream videos and watch as many anime shows as they can within a day. If we can apply the power and speed of internet connection to cyborgs, imagine how effective and efficient their AI and ML could be!

The faster the processing time, the better the robots can function. In a similar fashion, a VMMORPG would function smoothly because it can download and process large commands between humans and humanoids. There would be less electromagnetic signal interference when walking, running, sitting, eating, and fighting in the virtual world!

It’s all thanks to fiber optics carrying data up to 25 miles! Since they use glass cores that carry data as pulses of light, rather than electrical signals in a metal conductor, an overwhelming amount of data could be transmitted between the human brain and holographic avatars. This would open users to the powerful internet and transformative information exchange as we know it!
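To get a feel for how quick that is, here is a rough back-of-the-envelope sketch in Python. It estimates how long a pulse of light needs to travel through 25 miles of fiber. The refractive index of 1.47 is my assumption for typical glass fiber (light moves slower in glass than in a vacuum), and the function name is made up for this post. Real networks add extra delay from routers and processing, so treat this as a lower bound.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in a vacuum
METERS_PER_MILE = 1609.344
ASSUMED_REFRACTIVE_INDEX = 1.47       # assumption: typical glass fiber

def fiber_delay_ms(distance_miles, refractive_index=ASSUMED_REFRACTIVE_INDEX):
    """One-way travel time of light through the fiber, in milliseconds."""
    distance_m = distance_miles * METERS_PER_MILE
    speed_in_fiber = SPEED_OF_LIGHT_M_PER_S / refractive_index
    return distance_m / speed_in_fiber * 1000.0

delay_ms = fiber_delay_ms(25)
print(f"25 miles of fiber: about {delay_ms:.2f} ms one way")
```

Under these assumptions the trip takes roughly 0.2 milliseconds, far quicker than a human reaction, which is why fiber looks so attractive for sending signals between a player and an avatar.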

Right now, it’s impossible to apply the power and speed of fiber internet to VR because:

- fiber optic cables are expensive – producers of virtual reality games need hundreds, if not thousands, of dollars before they can mass-produce a fully functioning VMMORPG; and

- new infrastructure is required – if suppliers of the gaming industry want a faster and better connection so their gamers can manipulate their avatars in VR seamlessly, a newer and more sophisticated infrastructure is mandatory; this will take time if all parties want fiber-optic technology to operate at full capacity.

Brain Interfacing

Brain interfacing is one of the scientific breakthroughs that mankind has been researching since the 1970s. If you think that it’s simply planting chips in human and animal brains, well, think again. There are pros and cons to brain-computer interfaces, otherwise known as BCIs.

First, the pros: if you want visual and tactile experiences through Fulldive, several microelectrode arrays must be implanted in the brain’s visual cortex and motor cortex.

Not only would that allow VR gamers neural interaction with the artificial worlds; it could even let them perform a kind of human telepathy through the power of technology! And though this process is also labeled “telecommunication”, it would be awesome to see it implemented in real life, even if only in VR!

To perform artificial communication through conscious thoughts, quantum algorithms (hence, quantum computing) and telemetry are mandatory. I’ve discussed quantum computing in the paragraphs above, so the only concept left to explain is telemetry.

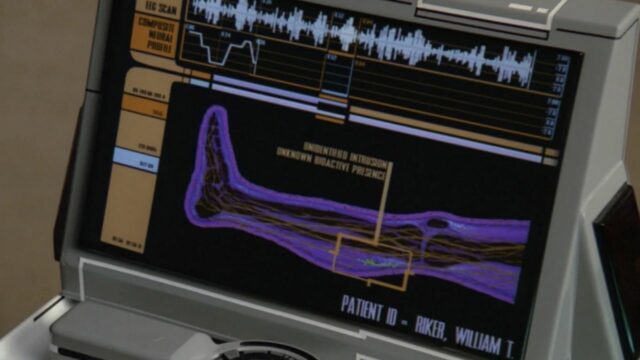

Telemetry is basically BCI’s non-invasive way to exchange signals with the brain’s motor cortex. It could allow the avatars in the VR to raise their arms through transcranial magnetic stimulation (TMS), which sends signals in, or electroencephalography (EEG), which reads signals out.

Another way to explain telemetry is that it’s like switching the TV on using the remote control. However, when applied to AI and ML, the data automatically recorded and transmitted between the two machines performing the complex commands is monitored, analyzed, and controlled from inaccessible or remote locations.

But even if TMS and EEG are non-invasive, they are regulated as medical tools. In other words, they cannot freely be used commercially on humans for gaming; therefore, it’s another obstacle before Fulldive can be realized. The legal and commercial limits on applying TMS and EEG to VMMORPGs are a restriction and, hence, a disadvantage of brain-to-brain interfacing (BBI).

As for EEG (the reader of brainwaves), I will discuss it as a separate topic in another section. See “What are EEGs?” subheading of this blog if you’d like to read more about that.

6. Limitations of Fulldive VR

Three things will limit gamers from experiencing Fulldive VR at the level of SAO’s VMMORPG:

- Our brains must be scanned after they get plugged in. They must be thoroughly connected to several wires (i.e., microelectrode arrays) of certain neurological helmet devices. As they are meant to invade your brain cells, these devices fall outside the safety limits of the TMS and EEG helmets I spoke of earlier.

Not only does this involve serious risk, but invasively accessing one’s brain through neurosurgery is like cutting the brain open. The devices will scan the brain cells, or the individual neurons, that help us perform basic activities like standing and sitting.

As the devices scan, invasive brain access is slowly performed by the machines. This painstaking method requires less computing power to process the few individual scans (or images) it needs. As soon as our brains get invaded, there’s a possibility we might not open our eyes anymore.

Invasive brain access requires a Fulldive experience where patients must immerse themselves in the VR world (thus, losing consciousness of reality). In other words, we will be literally stuck in an inescapable world where our brain cells are constantly and continuously invaded! 😰

That’s why such procedures are tightly restricted, even for patients. It truly is frightening even if gamers simply want a Fulldive AR & VR experience just for entertainment.

- The second limitation is that your nervous system must go into inactivity mode and your muscles must go numb. You don’t feel the wooden floor you’re standing on anymore. Your fingers become completely numb and paralyzed as they lie “lifeless” on the armchair. You won’t be able to feel anything.

- The third limitation is losing complete consciousness of the physical realm, meaning going into “Sleep Mode”. You’ll be completely cut off from reality because your brain will be consumed by indolence (another word for laziness and apathy). You’re entering (or rather, “sleeping into”) a dream-like wonderland: the Fulldive Virtual Reality experience itself!

To fully immerse oneself in the AR & VR experience, sacrifices must be made. We must forget “touch”, “taste”, and “smell” so we can slowly but surely leave the physical realm. Therefore, our brains are the only ones operating so we can “see”, “hear”, and to an extent, “feel with our emotions” the Fulldive AR & VR experience.

7. Decades of Painstaking Science & Technology

I know what you’re thinking: why can’t SAO-themed VRs happen sooner? If they could happen sooner, amateur & pro-gamers could be isekai’d into lands of the unknown. Their bodies would experience that overwhelming feeling of touching the desert sands in the same way Kirito was transported into “The World of Swords”.

Well, the simple answer is that it takes decades for painstaking science and technology to intimately touch human lives. 🙂 Here we are, almost ten years after SAO started airing in 2012, and the trends among AR & VR amateur and pro-gamers (and perhaps, SAO fanatics) are:

- VR helmets / VR headsets or head gears;

- VR apps: Google Expeditions, Google Earth VR, Ocean Rift, The Foo Show, Tilt Brush, Fulldive VR

I could only imagine AR & VR literally taking over the world because, after all, AI is programmed to continuously learn. And since they’re constantly learning, they’re upgrading their ML capabilities daily and becoming better thinkers as the creativity and ingenuity of humans degenerate every day.

Humans might find themselves one day merged with AI. Though this means becoming cyborgs, humanity’s convergence with AI is inevitable in a technologically driven world in the distant future.

Still, one must remember that not all VR apps popped out of the blue in the previous ten years. The prototypes of these VR headsets, gears, and other fancy gadgets have existed since the 1960s. There is one headgear, however, that I’d like to mention that is not used in gaming yet. It’s the ever popular, but also terrifying EEG!

8. What are EEGs?

EEG, or electroencephalograph, is a BCI helmet-like device that detects or records patients’ brainwave patterns. It’s a device incapable of producing any sensation because it’s mostly used for identifying seizure origins and types.

The groundwork for EEGs was laid in 1875 by Richard Caton, who detected electrical activity in animal brains. But it was only in 1924 that psychiatrist Hans Berger recorded the first EEG on a human. Approximately 76 years later (in the early 2000s), EEG prototypes were developed and “EEG feeds” were implanted inside several patients’ brains.

That said, EEG’s inability to produce any sensation does not mean patients will not feel anything. After all, EEG electrodes are placed in an array along a patient’s head or a test subject’s scalp. Still, medical doctors use EEGs widely because patients feel either “little discomfort” or “no discomfort at all” during their medical diagnosis.

Since EEGs only record the brain’s electrical activity, no electricity is sent into the brain. And because EEGs are incapable of producing sensation, patients do not feel shocks on their scalps or anywhere else on their bodies.

The only people who face a notable risk during an EEG are patients who suffer from seizure disorders. Patients like them are an exception: the EEG itself still does not shock them, but the flashing lights or deep breathing sometimes used during the test can trigger a seizure.

We must remember that EEGs function to detect abnormalities while reading patients’ brain patterns. There’s no risk of getting an electric shock from an EEG. If a patient with a seizure disorder does have a seizure during the test, they are treated immediately by healthcare providers.

9. EEG in VR is Possible

Several university students and researchers in STEAM (science, technology, engineering, arts, and mathematics), computer science, linguistics, and psychology in different parts of the globe have conducted recent studies on meshing EEG with VR (see external links below).

I do not have full access to the research studies below as they require me to either sign in or log on to the institutions’ websites. However, from what I’ve gathered, their studies show the following:

- Estimating Cognitive Workload in an Interactive Virtual Reality Environment Using EEG

EEG’s electrical recordings can distinguish between users’ mental workload levels when they perform an interactive VR task. This means that differences in brain activity occur in virtual environments even when users perform only cognitive tasks (i.e., thinking, learning, remembering, reasoning, etc.).

- EEG as an Input for Virtual Reality

The closest VR experience that EEG can provide is when gamers allowed themselves to be affected dynamically (i.e., allowing EEG’s predefined brainwave patterns to change their emotions, mood, and concentration).

Right now, ethics and laws prohibit technology from completely invading gamers’ brain cells. Therefore, the physical bodies cannot cross over to the digital realm, but EEG’s electrical recordings can reach the gamers’ emotions up to a certain degree (which is still within the “no sensation, no feeling, no touch” boundaries of EEG headsets).

- Immersive EEG: Evaluating Electroencephalography in Virtual Reality

Under certain conditions, combining EEG and VR is possible even without reducing the physical strain on the EEG headset. As a matter of fact, when the VR headsets are customized, the signal quality even shows improvements!

VR users will sense through their nervous systems significant delays. It’s like a backlog or a glitch on your gesture, gaze, speech, facial expressions, etc. because you’re zoning in and out from the virtual environment almost every minute.

- Virtual reality: A game-changing method for the language sciences

VR can be used as an experiential learning tool for language sciences because it allows reproducible science across different research labs and participants.

- The combined use of virtual reality and EEG to study language processing in naturalistic environments

In support of the previous study, combining VR and EEG is very useful for neurophysiological communicative studies because it opens rich, ecologically valid, and natural environments for experiential learning.

- The Use of Portable EEG Devices in Development of Immersive Virtual Reality Environments for Converting Emotional States into Specific Commands.

VR can be used to monitor and control EEG signals, and eventually, the emotions and feelings of VR gamers.

The more relaxed the test subject is, the stronger the theta rhythm is across the EEG channels. Theta rhythms are neural oscillations observed in the sleeping state of a mammal’s hippocampus. They are essential for cognitive and behavioural learning such as spatial navigation and memory.

Conversely, a frightened test subject will show an increase in unwanted beta waves on the brain’s left side (used for information analysis, reading, writing, sequential thinking, etc.). Beta waves appear when a subject is awake because they represent increased concentration, anxiety, and other busy actions of the subject.
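If you’re curious how software could tell theta from beta waves, here is a minimal sketch in plain Python. It is not taken from the studies above. It builds a fake “brainwave” out of two sine waves and measures how much of the signal falls into each band, using the commonly quoted ranges of roughly 4 to 8 Hz for theta and 13 to 30 Hz for beta. The sample rate, the amplitudes, and the function names are all assumptions I made up for illustration; a real EEG recording is far noisier.

```python
import cmath
import math

THETA_BAND_HZ = (4.0, 8.0)    # commonly quoted theta range
BETA_BAND_HZ = (13.0, 30.0)   # commonly quoted beta range

def synthetic_eeg(theta_amp, beta_amp, sample_rate_hz=128, seconds=2):
    """Fake signal: a 6 Hz 'theta' wave plus a 20 Hz 'beta' wave."""
    count = sample_rate_hz * seconds
    return [
        theta_amp * math.sin(2 * math.pi * 6 * i / sample_rate_hz)
        + beta_amp * math.sin(2 * math.pi * 20 * i / sample_rate_hz)
        for i in range(count)
    ]

def band_power(samples, sample_rate_hz, band_hz):
    """Relative power inside a frequency band, via a plain (slow) Fourier transform."""
    count = len(samples)
    low, high = band_hz
    power = 0.0
    for k in range(1, count // 2):
        freq = k * sample_rate_hz / count
        if low <= freq < high:
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * i / count)
                for i, x in enumerate(samples)
            )
            power += abs(coeff) ** 2 / count ** 2
    return power

# Hypothetical "relaxed" subject: strong theta, weak beta.
relaxed = synthetic_eeg(theta_amp=2.0, beta_amp=1.0)
theta_power = band_power(relaxed, 128, THETA_BAND_HZ)
beta_power = band_power(relaxed, 128, BETA_BAND_HZ)
print(f"theta/beta ratio: {theta_power / beta_power:.1f}")
```

A higher theta-to-beta ratio would hint at a calmer subject in this toy setup, while a frightened subject would push the ratio down. Real systems filter out noise and average over many electrodes before drawing any conclusion.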

10. Pros of EEGs

For now, EEG is one of the safest tools to measure brain patterns. The benefits involved are greater than the risks. And as such, it greatly benefits the neuroscience industry because EEGs can connect or plug safely to the human neural system.

Like I said above, it’s the closest one I can think of for patients and gamers alike to experience some — SOME — form of virtual reality. Even though the patients are not gamers, they’re utilizing AR & VR in alternative ways that could benefit them.

The patients’ goals are to re-train their nervous systems and muscular systems by walking back and forth inside the hospitals and institutions’ rehabilitation centres. They need to walk again, use their fingers again, feed themselves again with a spoon and fork, etc. All the necessities a normal 7-year-old child can already do, the patients need to relearn so they can heal! 😢

11. Cons of EEGs

On one hand, it’s good that EEG devices are used in the medical industry to help patients. But players wanting to experience Fulldive AR & VR can’t do so yet due to safety and ethical reasons. They must wait for years, even decades, just to get to that point.

EEGs are not utilized in the entertainment industry nor in the gaming industry. In the research studies I’ve linked above, all researchers have limited EEG to reading brainwave patterns alone regardless of the fields used: experiential learning, communicative methods, dynamic use, and cognitive workloads.

EEGs are incapable of sending electricity to the brain. Since EEGs only read brain activity, they cannot make patients feel pain or shocks, nor can they send commands such as sitting, reading, or walking to the human body.

There is no way for EEGs to control the human brain except through brain-cell intrusion. However, this is equivalent to trespassing. Possible computational breaches and invasive risks could give rise to unethical conduct if there is ever a chance for EEG helmets to send electricity to the brain.

Remember how Akihiko Kayaba (SAO’s “NerveGear” creator) removed the logout function for the 10,000 players who logged in on the game’s launch day in Aincrad (“The World of Swords”)? One wrong and deliberate move by the Game Master himself, and many people in the SAO anime were driven to suicide just to escape the false reality. (In any case, if you think that isn’t scary, wait ‘till you get to the latest episodes of the anime! 😭)

Of course, SAO uses fictional universes because it is only a show; but the series reflects a scientific reality to a certain degree. Whoever controls a technology like NerveGear will have the ultimate control to wield its power and resources, however badly they may use them. Hence, Fulldive VR immersion would likely be heavily restricted by governments because it could potentially send its users into sleep-like death modes.

12. Artificial Intelligence (AI) and Machine Learning (ML)

Scientists had already thought of AI concepts well before the 21st century began. But nowadays, we can feel its impact very strongly because of the ubiquitous gadgets and games we have in our hands: tablets, PCs, cellphones, RPGs, and dating simulation games.

13. Pros of AI and ML

I. Human Intelligence

You have seen the speaking robots and goggles that high-tech nations have developed in the past decades, right? However, talking robots and impressive digital specs are just the bare minimum. Human intelligence takes AI and ML to another level when non-playable characters (NPCs) enter the picture.

If you mesh large sets of algorithms on NPCs through machine learning, gamers will experience a simulation they never would have expected. The reason? Well, the mechanics that game developers plugged into the video game utilize incredible AI!

Not only did the video game apply complex algorithms through ML; it also accurately predicted the user’s movements! It may be creepy at first, but this isn’t impossible with the vast sets of algorithms installed in the video game. If anything, the enemy just became smarter as if it were a breathing and real-life opponent!
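How could an NPC “predict” a player at all? Here is a minimal sketch in plain Python of one very simple approach: count which move the player tends to make after each move, then guess the most frequent follow-up. This is just an illustration under my own assumptions, not how any particular game works, and the class name, method names, and move names are all made up.

```python
from collections import Counter, defaultdict

class MovePredictor:
    """Guesses the player's next move from what usually follows their last move."""

    def __init__(self):
        # For each move, count which moves the player made right after it.
        self.follow_ups = defaultdict(Counter)
        self.last_move = None

    def observe(self, move):
        """Record a move the player just made."""
        if self.last_move is not None:
            self.follow_ups[self.last_move][move] += 1
        self.last_move = move

    def predict(self):
        """Return the most common follow-up to the last move, or None if unknown."""
        counts = self.follow_ups.get(self.last_move)
        if not counts:
            return None
        return counts.most_common(1)[0][0]

# Hypothetical fight log: this player likes to slash right after dodging.
predictor = MovePredictor()
for move in ["dodge", "slash", "dodge", "slash", "dodge", "block", "dodge"]:
    predictor.observe(move)

prediction = predictor.predict()
print(f"The NPC expects the player to: {prediction}")
```

With this log, the NPC has seen “dodge” followed by “slash” twice and by “block” once, so it braces for a slash. Games that feel smarter than this would need far more data and far richer models, but the idea of learning from a player’s habits is the same.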

To some gamers, this would be one heck of a challenge. They would be excited because their interactions with the NPCs are more engaging even though they are unpredictable. Their opponents will respond with a certain degree of realism on their conversations (and not just monologue their way out to battle.)

AR & VR have been on the move rising at breakneck speed. So, NPCs are good starting points for humanity to break through the barriers of AI and ML when reaching an SAO-level VMMORPG.

With the technology we now have, it’s not impossible to reach an incredible feat even though it would take decades. And remember, it is not just through the RPGs or the consoles we hold in our hands!

Peak AI and ML on human intelligence or NPCs can happen on the versions of VRs humanity will possess in the distant future. And that’s one thing to get excited about!

II. AI and ML Applications

There are also AI applications that could make photos move. (See myheritage.com to see more of this incredible feat.) These complex scientific applications are one of the reasons why real-life gamers can manipulate fictional playable characters.

Another example is the evolution of technology. Take the simple joystick we all know and love. While joysticks are still utilized in our generation, game consoles have also evolved! Instead of joysticks, many pro-gamers are using electronic gauntlets because these sophisticated devices use AI and ML to sense and track a person’s hand movements through the human nervous system.

In many gaming platforms, the player’s task is to simply step, stand, or walk on a specified area, and voila! They’ve already stepped into a world of wonder (and that’s just using AI, ML, and human intelligence)!

As for other senses, I know what you’re thinking. VR shouldn’t just be limited to hearing or vision. It should also make use of our olfactory senses and tactile responses! For scent, there’s Linus Tech Tips’ Smell-O-Vision, where gamers smell these special scents so they can be more integrated into the VR applications. (Follow the external link on Linus Tech Tips’ Smell-O-Vision in the Sources section below if you’d like to know more about this.)

For tactile, well, it gets more complicated because of the electrodes that either must be placed on your scalp or invade (but damage) your brain cells (read “What are EEGs?” section above if you’d like to find out more!).

Game developers profit from AI so well because they harness its powers. They tap into gamers’ personal, emotional, and mental connections through the gaming system’s statistics.

Once the data is retrieved and the connections between players and the games themselves are identified and tracked, developers will reuse and recycle this formula or habit and promote “new games” so they can sell them to amateur and pro-gamers alike.

In other words, the cycle of increased sales and profits will continue climbing, making the developers richer while the VR companies enlarge their market niche.

The AI of the world we’re living in right now may not give us that full SAO experience. We might not be able to immerse ourselves into Fulldive like SAO’s NerveGear yet. However, scientists and researchers were still able to come up with interesting concepts and devices like the ones above that could be stepping-stones to an SAO-themed VMMORPG!

III. Digital Eyes

Our eyes are one of two keys that would entice gamers into believing they have entered fantasy lands and are now talking to NPCs. (The second key would be hearing.) In reality, they are only in a room wearing their VR helmets, only slightly unaware of their surroundings. The less gamers use their sense of touch, the more immersed they can be when starting in VR.

Take this for example: “Half-Life: Alyx” is the one that goes in the right direction for VR games. Your consciousness would still be there of course, but it will take you a “little bit closer” to an SAO-VMMORPG experience.

Other examples are “Star Trek” VR games; they let you explore their worlds much as you might trek across Aincrad’s deserts! Star Trek VR is “closer” to an SAO-VMMORPG experience because of its “realism” (unlike Blade and Sorcery, a VR game which is a long, long way from allowing you to experience something as close as SAO, because it only allows you to control the virtual avatar with handheld motion controllers, not with your thoughts).

The users are also only choosing to see, hear, feel, and emote on what their VR world is presenting to them at that time. The VR helmet transmits only certain data (images and sounds) to the brain cells of the players (making them very picky or selective of what they believe since they are only using 2 out of our 5 senses).

In SAO, the NerveGear acts as the VR helmet that enabled players to “Fulldive” into Aincrad. Our reality, more or less, allows VR players to do the same. Since neurologists are prohibited from performing a brain invasion on a person, gamers aren’t allowed to experience Fulldive completely.

However, it’s enough to add “realistic” visuals and sounds (and emotions and feelings to a certain extent) when one peeks into the goggles of a VR helmet. Gamers can partially immerse themselves then and there into their fantasy lands!

14. Cons of AI and ML

I. Fake Identities

My understanding of AI may be limited to Amazon Alexa, Apple’s Siri, Google Assistant, and a car’s GPS voice recognition. But even a child can fully understand that the voices they are hearing belong to objects and not people.

Like many aspects of science, AI, ML, and human intelligence possess many flaws. One of these is using a Generative Adversarial Network (GAN) to generate fake identities. It may be cool at first; however, the truth behind GAN is simple: it learns from real images, such as those scattered across the internet, to generate artificial photos (hence, “fake identities”).

If you go to https://thispersondoesnotexist.com, it will generate a picture of a person who has never existed in the real world. A GAN uses machine learning to produce its output (i.e., the picture): one network generates candidate images while a second network judges whether they look real. Having learned from a large collection of real face photos, it will always show a picture of a fictional person you will surely never meet in real life!

Your eyes may be looking at the digital photo of a real person. But no matter how many times you refresh the page, that person does not exist.

II. Lacking Human Empathy

Don’t get me wrong. Digital assistants are incredible in their superhuman ways. When I watched SAO for the first time in 2012, I could never imagine speaking to objects and devices, let alone my very own cellphone!

However, AR & VR generate a world of fictional interactions between real-life players and robots mobilized with AI, ML, and human intelligence. Despite their magnificent capacity, robots are not equipped with emotions; hence, they lack human empathy. It will definitely be awkward for human players and gamers to connect with robots at a personal level because they are programmed to follow only a few speeches and commands.

In the distant future, we may seamlessly interact with robots like the ones portrayed in the Chobits anime, or the holographic ones shown in the Avatar movie. However, ethical issues come into play again to limit what humanity can achieve through ground-breaking science. Furthermore, robots will never experience either empathy or sympathy the way human beings do.

III. Tweaking Emotions and Perceptions

Take this metaphor for example: all manner of comprehension evaporates into thin air when a woman sees her husband sleeping with his mistress; or when a parent sees the corpse of their child; or when you see your home burning before your eyes.

Seeing is believing, and when we see disaster strike in front of our eyes, our willpower evaporates like morning fog. It’s as if it wasn’t even there to begin with.

In other words, humans are weak when they see reality crumble before their eyes. Humans are weak in these instances because they do not wish to believe what their eyes are telling them. It’s only after an outpouring of devastated emotions that people will choose to see with their hearts.

Give them time to breathe so they can process the disaster that unfolded before their very eyes. Then, if they are willing, they can generate the willpower to see with their eyes and their hearts. Slowly but surely, their stamina and perseverance will come right back. If they choose to do so, they’ll grow stronger little by little with the support of good friends.

Why the above story? Well, our brains are pre-conditioned to see the “normal”. When we see the abnormal (like the disasters I mentioned above), humans are naturally inclined to believe the opposite of what they are actually and truly seeing within the first 20 to 30 seconds.

With the power of AI and a tweaking of our feelings and emotions via safe EEG, NPCs can communicate to us within AR & VR. By that time, mankind will have reached unimaginable heights. Game developers, scientists, researchers, and technologists have already tweaked players’ perceptions and emotions through the mobile games, video apps, and VR helmets that users played steadily over time.

The paragraph above goes beyond normal comprehension if you ask me. Breathing NPCs communicating with us in real time? Quite the fantasy today; but that’s a possibility in the distant future because NPCs are no different from humanoid robots. They lack the five senses, yet they can still follow commands because these computer programs are their “hearts”.

Robots might not be able to see, hear, touch, smell, or feel, but with their “brains”, they will appear to players and gamers as if they are real-life humans in virtual worlds.

IV. VR & AI Crossover

When it comes to AI, ML, human intelligence, AR, and VR, the rules for “seeing is believing” are the same. But instead of an actual human being breathing in front of you, there are humanoid robots standing before you. It may be pleasing to you to look at the manmade humanoid robots. You’d even converse with them, given the opportunity, if they look “human enough”.

But there will come a point that they will look frightening to you. Your brain will tell you that it’s unnatural to talk to an object despite it being shaped and formed like a human. In this case, you’re experiencing the “uncanny valley”. Like I said, it is easy to believe what we see with our eyes, but difficult to believe with our hearts.

The more you look at a speaking robot shaped like a human, the more your brain will refuse to believe that you’re speaking to a living, breathing soul (or a person). This is the essence of the “uncanny valley”. You may be surprised at first, but there will come a point where you will be repulsed by the humanoid robot despite what your eyes tell you.

15. Conclusion: A Perfect Combo of Fantasy and Science

Now that we have taken a deep dive into whether SAO can cross into our reality, we need to answer the question: why should people care about alternate SAO universes crossing over to people like us living in real time? For that, we need to answer what makes AR & VR so enticing to amateurs and pro-gamers alike!

The power of SAO is to glue kids, teens, and young adults to their couches so they can finish watching several 30-minute SAO episodes. Likewise, the magnetic power of AR & VR is to never let its users go. That’s right. In essence, “Never let users go, through the power of fantasy and science!”

Fantasy and science should contradict each other, but game developers mesh and overlap them to create alternate realities. Virtual worlds thrill gamers because they heighten the excitement of “zoning in” to different entertainment mediums. What could be a more entertaining combo than players entering alternate universes (“fantasy” lands) through the technological and innovative gadgets of the scientific civilisation we’re living in right now?

At a basic level, it’s ironic to think that fantasy goes hand-in-hand with science. But this is not far from the truth, as viewers saw in the captivating 1st episode of SAO Season 1: alternate universes parallel real life one way or another.

Akihiko Kayaba (the creator of the “NerveGear” gaming console) entrapped 10,000 gamers in Aincrad (“The World of Swords”), where beta-tester Kirito and newcomer Klein were merely playing/messing around. Likewise, video game developers and VR companies have entrapped users like us (in one way or another) with their expensive AR/VR technologies, gacha games, and mobile RPGs, even if we claim we’re only “innocently trying out new things” so we won’t miss out on the fun!

AR & VR pull us in, immerse us, and consume us. Over time, they have picked up many nicknames that gamers and big-time AR & VR developers (e.g., Unity Technologies, Iflexion, Next/Now, Nomtek, VR Vision) use interchangeably:

- Artificial Reality

- Cyberspace

- Holographic Reality

- Idolism

- Illusion

- Internet Life

- Make-believe

- Virtual Realm

AR & VR point us to one inescapable fact: “We’re stuck, and it’s hard to escape because we intentionally threw away our keys so we can always stay or make up excuses to never get out from these magnificent, wondrous, make-believe universes.”

They are hard to escape from because they offer us an escape from real life. That’s why scientists, researchers, and game developers will keep pushing forward to grab whatever opportunities the scientific future provides the lucrative AR & VR industries.

At the heart of it all, entertainment and fun are capitalized on through innovation and desire. And when scientists, researchers, technologists, mathematicians, and developers capitalize on entertainment through mind-blowing equipment and machines, they create the perfect combo of fantasy and science!

16. Online Sources

17. About Sword Art Online

At the launch of the game, 10,000 people had connected themselves to the virtual reality of Sword Art Online. The plot takes a twist when people realize that they can’t quit the game.

Protagonist and pro-gamer Kirito, who took part in the SAO beta test, starts planning to clear levels as soon as possible. Throughout his quest to finish the game, he meets many friends and a love interest, Asuna.

The plot and the whole premise of the show are remarkably unique and quite believable in some futuristic sense!
